Hello everyone!

With new mobile OS versions releasing late this year, we recommend preparing your React Native apps beforehand to avoid regressions when the releases become generally available.

For a long time now, Apple has discouraged using UIWebViews in favor of WKWebView. In iOS 12, which will be released in the upcoming months, UIWebViews will be formally deprecated. React Native's iOS WebView implementation relies heavily on the UIWebView class. Therefore, in light of these developments, we've built a new native iOS backend to the WebView React Native component that uses WKWebView.

The tail end of these changes landed in this commit and will become available in the 0.57 release.

To opt into this new implementation, please use the useWebKit prop:

<WebView
  useWebKit={true}
  source={{uri: 'https://www.google.com'}}
/>

UIWebView had no legitimate way to facilitate communication between the JavaScript running in the WebView, and React Native. When messages were sent from the WebView, we relied on a hack to deliver them to React Native. Succinctly, we encoded the message data into a url with a special scheme, and navigated the WebView to it. On the native side, we intercepted and cancelled this navigation, parsed the data from the url, and finally called into React Native. This implementation was error prone and insecure. I'm glad to announce that we've leveraged WKWebView features to completely replace it.
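To make the old workaround concrete, here is a rough TypeScript model of the round trip described above. It is only a sketch: the scheme name and both function names are invented for illustration, and the real logic lived in native iOS code rather than in JavaScript.

```typescript
// Hypothetical scheme name, chosen for illustration only.
const MESSAGE_SCHEME = 'example-bridge';
const MESSAGE_PREFIX = MESSAGE_SCHEME + '://message?payload=';

// WebView side: pack the message into a URL that the page would navigate to.
function encodeMessageUrl(data: string): string {
  return MESSAGE_PREFIX + encodeURIComponent(data);
}

// Native side (modelled here in TypeScript): recognise the special scheme and
// recover the payload. Returns null for ordinary URLs.
function decodeMessageUrl(url: string): string | null {
  if (url.indexOf(MESSAGE_PREFIX) !== 0) {
    return null; // an ordinary navigation
  }
  return decodeURIComponent(url.slice(MESSAGE_PREFIX.length));
}

const encoded = encodeMessageUrl(JSON.stringify({type: 'greeting', body: 'hi & bye'}));
console.log(encoded);
console.log(decodeMessageUrl(encoded));
```

Returning null for ordinary URLs stands in for letting a normal navigation proceed, while a non-null result corresponds to the intercepted and cancelled navigation.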

Other benefits of WKWebView over UIWebView include faster JavaScript execution and a multi-process architecture. Please see this 2014 WWDC session for more details.

If your components use the following props, then you may experience problems when switching to WKWebView. For the time being, we suggest that you avoid using these props:

Inconsistent behavior:

automaticallyAdjustContentInsets and contentInsets (commit)

When you add contentInsets to a WKWebView, it doesn't change the WKWebView's viewport. The viewport remains the same size as the frame. With UIWebView, the viewport size actually changes (gets smaller, if the content insets are positive).

backgroundColor (commit)

With the new iOS implementation of WebView, there's a chance that your background color will flicker into view if you use this property. Furthermore, WKWebView renders transparent backgrounds differently from UIWebView. Please look at the commit description for more details.

Not supported:

scalesPageToFit (commit)

WKWebView didn't support the scalesPageToFit prop, so we couldn't implement this on the WebView React Native component.

As technology advances and mobile apps become increasingly important to everyday life, the necessity of creating accessible applications has likewise grown in importance.

React Native's limited Accessibility API has always been a huge pain point for developers, so we've made a few updates to the Accessibility API to make it easier to create inclusive mobile applications.

accessibilityComponentType and accessibilityTraits are two properties that are used to tell TalkBack on Android and VoiceOver on iOS what kind of UI element the user is interacting with. The two biggest problems with these properties are that:

accessibilityTraits on iOS allows 17 different values while accessibilityComponentType on Android allows only 4 values. Furthermore, the values for the most part had no overlap. Even the input types for these two properties are different. accessibilityTraits allows either an array of traits to be passed in or a single trait, while accessibilityComponentType allows only a single value.

Accessibility Hints help users using TalkBack or VoiceOver understand what will happen when they perform an action on an accessibility element that is not apparent by only the accessibility label. These hints can be turned on and off in the settings panel. Previously, React Native's API did not support accessibility hints at all.

Some users with vision loss use inverted colors on their mobile phones to have greater screen contrast. Apple provided an API for iOS which allows developers to ignore certain views. This way, images and videos aren't distorted when a user has the inverted colors setting on. This API is currently unsupported by React Native.

In order to solve the confusion between accessibilityComponentType and accessibilityTraits, we decided to merge them into a single property. This made sense because they technically had the same intended functionality and by merging them, developers no longer had to worry about platform specific intricacies when building accessibility features.

Background

On iOS, UIAccessibilityTraits is a property that can be set on any NSObject. Each of the 17 traits passed in through the JavaScript property to native is mapped to a UIAccessibilityTraits element in Objective-C. Traits are each represented by a long int, and every trait that is set is ORed together.
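The "ORed together" idea is an ordinary bitmask. The sketch below illustrates it in TypeScript; the bit values and helper names are made up for the example and are not the real UIAccessibilityTraits constants.

```typescript
// Illustrative bit values only; these are NOT the real UIAccessibilityTraits constants.
const Trait = {
  button: 1 << 0,
  link: 1 << 1,
  selected: 1 << 2,
  disabled: 1 << 3,
};

type TraitName = keyof typeof Trait;

// OR every requested trait into a single integer mask.
function combineTraits(names: TraitName[]): number {
  return names.reduce((mask, traitName) => mask | Trait[traitName], 0);
}

// Check whether a given trait is present in a mask.
function hasTrait(mask: number, traitName: TraitName): boolean {
  return (mask & Trait[traitName]) !== 0;
}

const buttonSelected = combineTraits(['button', 'selected']);
console.log(buttonSelected, hasTrait(buttonSelected, 'selected'), hasTrait(buttonSelected, 'disabled'));
```

Because each trait occupies its own bit, any combination of traits fits in one integer, which is why the iOS property could accept either a single trait or an array of them.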

On Android however, AccessibilityComponentType is a concept that was made up by React Native, and doesn't directly map to any properties in Android. Accessibility is handled by an accessibility delegate. Each view has a default accessibility delegate. If you want to customize any accessibility actions, you have to create a new accessibility delegate, override specific methods you want to customize, and then set the accessibility delegate of the view you are handling to be associated with the new delegate. When a developer set AccessibilityComponentType, the native code created a new delegate based off of the component that was passed in, and set the view to have that accessibility delegate.

Changes Made

For our new property, we wanted to create a superset of the two properties. We decided to keep the new property modeled mostly after the existing property accessibilityTraits, since accessibilityTraits has significantly more values. The functionality of Android for these traits would be polyfilled in by modifying the Accessibility Delegate.

There are 17 values of UIAccessibilityTraits that accessibilityTraits on iOS can be set to. However, we didn't include all of them as possible values to our new property. This is because the effect of setting some of these traits is actually not very well known, and many of these values are virtually never used.

The values UIAccessibilityTraits were set to generally took on one of two purposes. They either described a role that UI element had, or they described the state a UI element was in. Most uses of the previous properties we observed usually used one value that represented a role and combined it with either “state selected,” “state disabled,” or both. Therefore, we decided to create two new accessibility properties: accessibilityRole and accessibilityStates.

accessibilityRole

The new property, accessibilityRole, is used to tell TalkBack or VoiceOver the role of a UI element. This new property can take on one of the following values:

none, button, link, search, image, keyboardkey, text, adjustable, header, summary, imagebutton

This property only allows one value to be passed in because UI elements generally don't logically take on more than one of these. The exception is image and button, so we've added a role imagebutton that is a combination of both.

accessibilityStates

The new property, accessibilityStates, is used to tell TalkBack or VoiceOver the state a UI element is in. This property takes an array containing one or both of the following values:

selected, disabled

To support accessibility hints, we added a new property, accessibilityHint. Setting this property will allow TalkBack or VoiceOver to recite the hint to users.

accessibilityHint

This property takes in the accessibility hint to be read in the form of a String.

On iOS, setting this property will set the corresponding native property accessibilityHint on the view. The hint will then be read by VoiceOver if Accessibility Hints are turned on in the iPhone's settings.

On Android, setting this property appends the value of the hint to the end of the accessibility label. The upside to this implementation is that it mimics the behavior of hints on iOS, but the downside to this implementation is that these hints cannot be turned off in the settings on Android the way they can be on iOS.

The reason we made this decision on Android is because normally, accessibility hints correspond with a specific action (e.g. click), and we wanted to keep behaviors consistent across platforms.

accessibilityIgnoresInvertColors

We exposed Apple's API AccessibilityIgnoresInvertColors to JavaScript, so now when you have a view where you don't want colors to be inverted (e.g. an image), you can set this property to true, and it won't be inverted.
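Taken together, the four new properties can be summarised as a small set of types. This is a sketch written for this post, not React Native's own type definitions; the type names, the variable names and the hint text are hypothetical, while the allowed values are the ones listed above.

```typescript
// Hypothetical type names; the allowed values are those listed in this post.
type AccessibilityRole =
  | 'none' | 'button' | 'link' | 'search' | 'image' | 'keyboardkey'
  | 'text' | 'adjustable' | 'header' | 'summary' | 'imagebutton';

type AccessibilityState = 'selected' | 'disabled';

interface AccessibilityProps {
  accessibilityRole?: AccessibilityRole;      // exactly one role
  accessibilityStates?: AccessibilityState[]; // zero, one or both states
  accessibilityHint?: string;                 // read out after the label
  accessibilityIgnoresInvertColors?: boolean; // iOS: skip color inversion
}

// A selected button with a hint (the hint text is an invented example).
const likeButtonProps: AccessibilityProps = {
  accessibilityRole: 'button',
  accessibilityStates: ['selected'],
  accessibilityHint: 'Removes your like from this post',
};

// An image that should keep its original colors when inversion is on.
const photoProps: AccessibilityProps = {
  accessibilityRole: 'image',
  accessibilityIgnoresInvertColors: true,
};

console.log(likeButtonProps, photoProps);
```

Objects shaped like these could be spread onto a component, for example `<TouchableOpacity {...likeButtonProps}>`.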

These new properties will become available in the React Native 0.57 release.

If you are currently using accessibilityComponentType and accessibilityTraits, here are the steps you can take to upgrade to the new properties.

The most simple use cases can be replaced by running a jscodeshift script.

This script replaces the following instances:

accessibilityTraits="trait"

accessibilityTraits={["trait"]}

With

accessibilityRole="trait"

This script also removes instances of accessibilityComponentType (assuming everywhere you set accessibilityComponentType, you would also set accessibilityTraits).

For the cases that used accessibilityTraits values with no corresponding accessibilityRole value, and the cases where multiple traits were passed into accessibilityTraits, a manual codemod would have to be done.

In general,

accessibilityTraits={["button", "selected"]}

would be manually replaced with

accessibilityRole="button"

accessibilityStates={["selected"]}
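The manual rule above can be written down as a small helper. This is a sketch for reasoning about the migration, not the jscodeshift script itself; the function name and the result shape are hypothetical, and it only recognises trait names that match one of the new role or state values exactly.

```typescript
// Values accepted by the new properties, as listed in this post.
const ROLES = ['none', 'button', 'link', 'search', 'image', 'keyboardkey',
  'text', 'adjustable', 'header', 'summary', 'imagebutton'];
const STATES = ['selected', 'disabled'];

interface MigratedProps {
  accessibilityRole?: string;
  accessibilityStates: string[];
  needsManualReview: string[]; // traits with no obvious new home
}

// Hypothetical helper: split old accessibilityTraits into a role and states.
function migrateTraits(traits: string | string[]): MigratedProps {
  const list = typeof traits === 'string' ? [traits] : traits;
  const roles = list.filter(t => ROLES.indexOf(t) !== -1);
  const accessibilityStates = list.filter(t => STATES.indexOf(t) !== -1);
  const needsManualReview = list.filter(
    t => ROLES.indexOf(t) === -1 && STATES.indexOf(t) === -1
  );

  let accessibilityRole: string | undefined;
  if (roles.length === 2 && roles.indexOf('image') !== -1 && roles.indexOf('button') !== -1) {
    // image + button is the one combination with its own role.
    accessibilityRole = 'imagebutton';
  } else {
    accessibilityRole = roles[0];
    // accessibilityRole takes a single value, so extra roles need a human decision.
    needsManualReview.push(...roles.slice(1));
  }
  return {accessibilityRole, accessibilityStates, needsManualReview};
}

console.log(migrateTraits(['button', 'selected']));
```

Anything that ends up in `needsManualReview` corresponds to the cases the post says must be migrated by hand.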

These properties are already being used in Facebook's codebase. The codemod for Facebook was surprisingly simple. The jscodeshift script fixed about half of our instances, and the other half was fixed manually. Overall, the entire process took less than a few hours.

Hopefully you will find the updated API useful! And please continue making apps accessible! #inclusion

It's been a while since we last published a status update about React Native.

At Facebook, we're using React Native more than ever and for many important projects. One of our most popular products is Marketplace, one of the top-level tabs in our app which is used by 800 million people each month. Since its creation in 2015, all of Marketplace has been built with React Native, including over a hundred full-screen views throughout different parts of the app.

We're also using React Native for many new parts of the app. If you watched the F8 keynote last month, you'll recognize Blood Donations, Crisis Response, Privacy Shortcuts, and Wellness Checks – all recent features built with React Native. And projects outside the main Facebook app are using React Native too. The new Oculus Go VR headset includes a companion mobile app that is fully built with React Native, not to mention React VR powering many experiences in the headset itself.

Naturally, we also use many other technologies to build our apps. Litho and ComponentKit are two libraries we use extensively in our apps; both provide a React-like component API for building native screens. It's never been a goal for React Native to replace all other technologies – we are focused on making React Native itself better, but we love seeing other teams borrow ideas from React Native, like bringing instant reload to non-JavaScript code too.

When we started the React Native project in 2013, we designed it to have a single “bridge” between JavaScript and native that is asynchronous, serializable, and batched. Just as React DOM turns React state updates into imperative, mutative calls to DOM APIs like document.createElement(attrs) and .appendChild(), React Native was designed to return a single JSON message that lists mutations to perform, like [["createView", attrs], ["manageChildren", ...]]. We designed the entire system to never rely on getting a synchronous response back and to ensure everything in that list could be fully serialized to JSON and back. We did this for the flexibility it gave us: on top of this architecture, we were able to build tools like Chrome debugging, which runs all the JavaScript code asynchronously over a WebSocket connection.
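A toy model helps show what "asynchronous, serializable, and batched" means in practice. The class below is a sketch written for this post, not React Native's actual bridge; the class and method names are hypothetical, and only the message shape follows the example above.

```typescript
// One queued call, shaped like ["createView", attrs] from the example above.
type Mutation = unknown[];

class BatchedBridge {
  private queue: Mutation[] = [];

  // Batched and asynchronous: calls are only queued, and nothing is returned to the caller.
  enqueue(method: string, ...args: unknown[]): void {
    const mutation: Mutation = [method];
    this.queue.push(mutation.concat(args));
  }

  // Serializable: the whole batch crosses the bridge as a single JSON string.
  flush(): string {
    const payload = JSON.stringify(this.queue);
    this.queue = [];
    return payload;
  }
}

const demoBridge = new BatchedBridge();
demoBridge.enqueue('createView', {tag: 1});
demoBridge.enqueue('manageChildren', 1, [2, 3]);
console.log(demoBridge.flush());
```

Since `enqueue` returns nothing and `flush` produces plain JSON, the receiving side could just as well be another process, which is the property that made tools like Chrome debugging possible.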

Over the last 5 years, we found that these initial principles have made building some features harder. An asynchronous bridge means you can't integrate JavaScript logic directly with many native APIs expecting synchronous answers. A batched bridge that queues native calls means it's harder to have React Native apps call into functions that are implemented natively. And a serializable bridge means unnecessary copying instead of directly sharing memory between the two worlds. For apps that are entirely built in React Native, these restrictions are usually bearable. But for apps with complex integration between React Native and existing app code, they are frustrating.

We're working on a large-scale rearchitecture of React Native to make the framework more flexible and integrate better with native infrastructure in hybrid JavaScript/native apps. With this project, we'll apply what we've learned over the last 5 years and incrementally bring our architecture to a more modern one. We're rewriting many of React Native's internals, but most of the changes are under the hood: existing React Native apps will continue to work with few or no changes.

To make React Native more lightweight and fit better into existing native apps, this rearchitecture has three major internal changes. First, we are changing the threading model. Instead of each UI update needing to perform work on three different threads, it will be possible to call synchronously into JavaScript on any thread for high-priority updates while still keeping low-priority work off the main thread to maintain responsiveness. Second, we are incorporating async rendering capabilities into React Native to allow multiple rendering priorities and to simplify asynchronous data handling. Finally, we are simplifying our bridge to make it faster and more lightweight; direct calls between native and JavaScript are more efficient and will make it easier to build debugging tools like cross-language stack traces.

Once these changes are completed, closer integrations will be possible. Today, it's not possible to incorporate native navigation and gesture handling or native components like UICollectionView and RecyclerView without complex hacks. After our changes to the threading model, building features like this will be straightforward.

We'll release more details about this work later this year as it approaches completion.

Alongside the community inside Facebook, we're happy to have a thriving population of React Native users and collaborators outside Facebook. We'd like to support the React Native community more, both by serving React Native users better and by making the project easier to contribute to.

Just as our architecture changes will help React Native interoperate more cleanly with other native infrastructure, React Native should be slimmer on the JavaScript side to fit better with the JavaScript ecosystem, which includes making the VM and bundler swappable. We know the pace of breaking changes can be hard to keep up with, so we'd like to find ways to have fewer major releases. Finally, we know that some teams are looking for more thorough documentation in topics like startup optimization, where our expertise hasn't yet been written down. Expect to see some of these changes over the coming year.

If you're using React Native, you're part of our community; keep letting us know how we can make React Native better for you.

React Native is just one tool in a mobile developer's toolbox, but it's one that we strongly believe in – and we're making it better every day, with over 2500 commits in the last year from 500+ contributors.

JavaScript! We all love it. But some of us also love types. Luckily, options exist to add stronger types to JavaScript. My favourite is TypeScript, but React Native supports Flow out of the box. Which you prefer is a matter of taste; they each have their own approach to adding the magic of types to JavaScript. Today, we're going to look at how to use TypeScript in React Native apps.

This post uses Microsoft's TypeScript-React-Native-Starter repo as a guide.

Update: Since this blog post was written, things have gotten even easier. You can replace all the set up described in this blog post by running just one command:

npx react-native init MyAwesomeProject --template react-native-template-typescript

However, there are some limitations to Babel's TypeScript support, which the blog post above goes into in detail. The steps outlined in this post still work, and Artsy is still using react-native-typescript-transformer in production, but the fastest way to get up and running with React Native and TypeScript is using the above command. You can always switch later if you have to.

In any case, have fun! The original blog post continues below.

Because you might be developing on one of several different platforms, targeting several different types of devices, basic setup can be involved. You should first ensure that you can run a plain React Native app without TypeScript. Follow the instructions on the React Native website to get started. When you've managed to deploy to a device or emulator, you'll be ready to start a TypeScript React Native app.

You will also need Node.js, npm, and Yarn.

Once you've tried scaffolding out an ordinary React Native project, you'll be ready to start adding TypeScript. Let's go ahead and do that.

react-native init MyAwesomeProject

cd MyAwesomeProject

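The next step is to add TypeScript to your project. The following commands will add TypeScript and the React Native TypeScript Transformer to your project, initialize a TypeScript config file, create an empty React Native TypeScript Transformer config file, and add typings for React and React Native.

Okay, let's go ahead and run these.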

yarn add --dev typescript

yarn add --dev react-native-typescript-transformer

yarn tsc --init --pretty --jsx react

touch rn-cli.config.js

yarn add --dev @types/react @types/react-native

The tsconfig.json file contains all the settings for the TypeScript compiler. The defaults created by the command above are mostly fine, but open the file and uncomment the following line:

{
  /* Search the config file for the following line and uncomment it. */
  // "allowSyntheticDefaultImports": true,  /* Allow default imports from modules with no default export. This does not affect code emit, just typechecking. */
}

The rn-cli.config.js contains the settings for the React Native TypeScript Transformer. Open it and add the following:

module.exports = {
  getTransformModulePath() {
    return require.resolve('react-native-typescript-transformer');
  },
  getSourceExts() {
    return ['ts', 'tsx'];
  },
};

Rename the generated App.js and __tests__/App.js files to use the .tsx extension (App.tsx and __tests__/App.tsx). index.js needs to use the .js extension. All new files should use the .tsx extension (or .ts if the file doesn't contain any JSX).

If you tried to run the app now, you'd get an error like object prototype may only be an object or null. This is caused by a failure to import the default export from React as well as a named export on the same line. Open App.tsx and modify the import at the top of the file:

-import React, { Component } from 'react';

+import React from 'react'

+import { Component } from 'react';

Some of this has to do with differences in how Babel and TypeScript interoperate with CommonJS modules. In the future, the two will stabilize on the same behaviour.

At this point, you should be able to run the React Native app.

React Native ships with Jest, so for testing a React Native app with TypeScript, we'll want to add ts-jest to our devDependencies.

yarn add --dev ts-jest

Then, we'll open up our package.json and replace the jest field with the following:

{
  "jest": {
    "preset": "react-native",
    "moduleFileExtensions": [
      "ts",
      "tsx",
      "js"
    ],
    "transform": {
      "^.+\\.(js)$": "<rootDir>/node_modules/babel-jest",
      "\\.(ts|tsx)$": "<rootDir>/node_modules/ts-jest/preprocessor.js"
    },
    "testRegex": "(/__tests__/.*|\\.(test|spec))\\.(ts|tsx|js)$",
    "testPathIgnorePatterns": [
      "\\.snap$",
      "<rootDir>/node_modules/"
    ],
    "cacheDirectory": ".jest/cache"
  }
}

This will configure Jest to run .ts and .tsx files with ts-jest.
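To see which files the testRegex setting picks up, the pattern can be tried against a few paths. The sketch below copies the pattern from the config above into a RegExp literal; the helper name and the utils/format.test.ts path are made up for the example.

```typescript
// The testRegex value from the Jest config, written as a RegExp literal.
const testRegex = /(\/__tests__\/.*|\.(test|spec))\.(ts|tsx|js)$/;

// Hypothetical helper: would Jest treat this path as a test file?
function isTestFile(filePath: string): boolean {
  return testRegex.test(filePath);
}

const candidatePaths = [
  'components/__tests__/Hello.tsx',                    // inside __tests__
  'components/Hello.tsx',                              // ordinary source file
  'utils/format.test.ts',                              // .test suffix (example path)
  'components/__tests__/__snapshots__/Hello.tsx.snap', // snapshot file
];

for (const candidate of candidatePaths) {
  console.log(candidate, isTestFile(candidate));
}
```

Only the first and third paths match. The snapshot file is already rejected by the pattern because it does not end in .ts, .tsx or .js, and the testPathIgnorePatterns entry excludes it explicitly as well.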

To get the best experience in TypeScript, we want the type-checker to understand the shape and API of our dependencies. Some libraries will publish their packages with .d.ts files (type declaration/type definition files), which can describe the shape of the underlying JavaScript. For other libraries, we'll need to explicitly install the appropriate package in the @types/ npm scope.

For example, here we'll need types for Jest, React, React Native, and React Test Renderer.

yarn add --dev @types/jest @types/react @types/react-native @types/react-test-renderer

We saved these declaration file packages to our dev dependencies because this is a React Native app that only uses these dependencies during development and not during runtime. If we were publishing a library to NPM, we might have to add some of these type dependencies as regular dependencies.

You can read more here about getting .d.ts files.

For your source control, you'll want to start ignoring the .jest folder. If you're using git, we can just add entries to our .gitignore file.

# Jest

#

.jest/

As a checkpoint, consider committing your files into version control.

git init

git add .gitignore # important to do this first, to ignore our files

git add .

git commit -am "Initial commit."

Let's add a component to our app. Let's go ahead and create a Hello.tsx component. It's a pedagogical component, not something that you'd actually write in an app, but something nontrivial that shows off how to use TypeScript in React Native.

Create a components directory and add the following example.

// components/Hello.tsx
import React from 'react';
import {Button, StyleSheet, Text, View} from 'react-native';

export interface Props {
  name: string;
  enthusiasmLevel?: number;
}

interface State {
  enthusiasmLevel: number;
}

export class Hello extends React.Component<Props, State> {
  constructor(props: Props) {
    super(props);

    if ((props.enthusiasmLevel || 0) <= 0) {
      throw new Error(
        'You could be a little more enthusiastic. :D',
      );
    }

    this.state = {
      enthusiasmLevel: props.enthusiasmLevel || 1,
    };
  }

  onIncrement = () =>
    this.setState({
      enthusiasmLevel: this.state.enthusiasmLevel + 1,
    });
  onDecrement = () =>
    this.setState({
      enthusiasmLevel: this.state.enthusiasmLevel - 1,
    });
  getExclamationMarks = (numChars: number) =>
    Array(numChars + 1).join('!');

  render() {
    return (
      <View style={styles.root}>
        <Text style={styles.greeting}>
          Hello{' '}
          {this.props.name +
            this.getExclamationMarks(this.state.enthusiasmLevel)}
        </Text>

        <View style={styles.buttons}>
          <View style={styles.button}>
            <Button
              title="-"
              onPress={this.onDecrement}
              accessibilityLabel="decrement"
              color="red"
            />
          </View>

          <View style={styles.button}>
            <Button
              title="+"
              onPress={this.onIncrement}
              accessibilityLabel="increment"
              color="blue"
            />
          </View>
        </View>
      </View>
    );
  }
}

// styles
const styles = StyleSheet.create({
  root: {
    alignItems: 'center',
    alignSelf: 'center',
  },
  buttons: {
    flexDirection: 'row',
    minHeight: 70,
    alignItems: 'stretch',
    alignSelf: 'center',
    borderWidth: 5,
  },
  button: {
    flex: 1,
    paddingVertical: 0,
  },
  greeting: {
    color: '#999',
    fontWeight: 'bold',
  },
});

Whoa! That's a lot, but let's break it down:

Instead of rendering HTML elements like div, span, h1, etc., we're rendering components like View and Button. These are native components that work across different platforms.

Styling is specified using the StyleSheet.create function that React Native gives us. React's stylesheets allow us to control our layout using Flexbox, and style using other constructs similar to those in CSS.

Now that we've got a component, let's try testing it.
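Before rendering anything, the component's string logic can be checked on its own. The sketch below lifts the getExclamationMarks expression out of Hello.tsx into a plain function; the greeting helper is hypothetical and simply mirrors the text that the component puts inside its Text element.

```typescript
// Same expression as Hello.getExclamationMarks: joining n + 1 empty slots
// with '!' produces exactly n exclamation marks.
function getExclamationMarks(numChars: number): string {
  return Array(numChars + 1).join('!');
}

// Hypothetical helper mirroring the text rendered by the component.
function greeting(personName: string, enthusiasmLevel: number): string {
  return 'Hello ' + personName + getExclamationMarks(enthusiasmLevel);
}

console.log(greeting('World', 3)); // prints "Hello World!!!"
```

Keeping logic like this in small typed functions makes it checkable by the compiler and easy to test without a renderer.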

We already have Jest installed as a test runner. We're going to write snapshot tests for our components, so let's add the required add-on for snapshot tests:

yarn add --dev react-addons-test-utils

Now let's create a __tests__ folder in the components directory and add a test for Hello.tsx:

// components/__tests__/Hello.tsx
import React from 'react';
import renderer from 'react-test-renderer';

import {Hello} from '../Hello';

it('renders correctly with defaults', () => {
  const button = renderer
    .create(<Hello name="World" enthusiasmLevel={1} />)
    .toJSON();
  expect(button).toMatchSnapshot();
});

The first time the test is run, it will create a snapshot of the rendered component and store it in the components/__tests__/__snapshots__/Hello.tsx.snap file. When you modify your component, you'll need to update the snapshots and review the update for inadvertent changes. You can read more about testing React Native components here.

Check out the official React tutorial and state-management library Redux. These resources can be helpful when writing React Native apps. Additionally, you may want to look at ReactXP, a component library written entirely in TypeScript that supports both React on the web as well as React Native.

Have fun in a more type-safe React Native development environment!

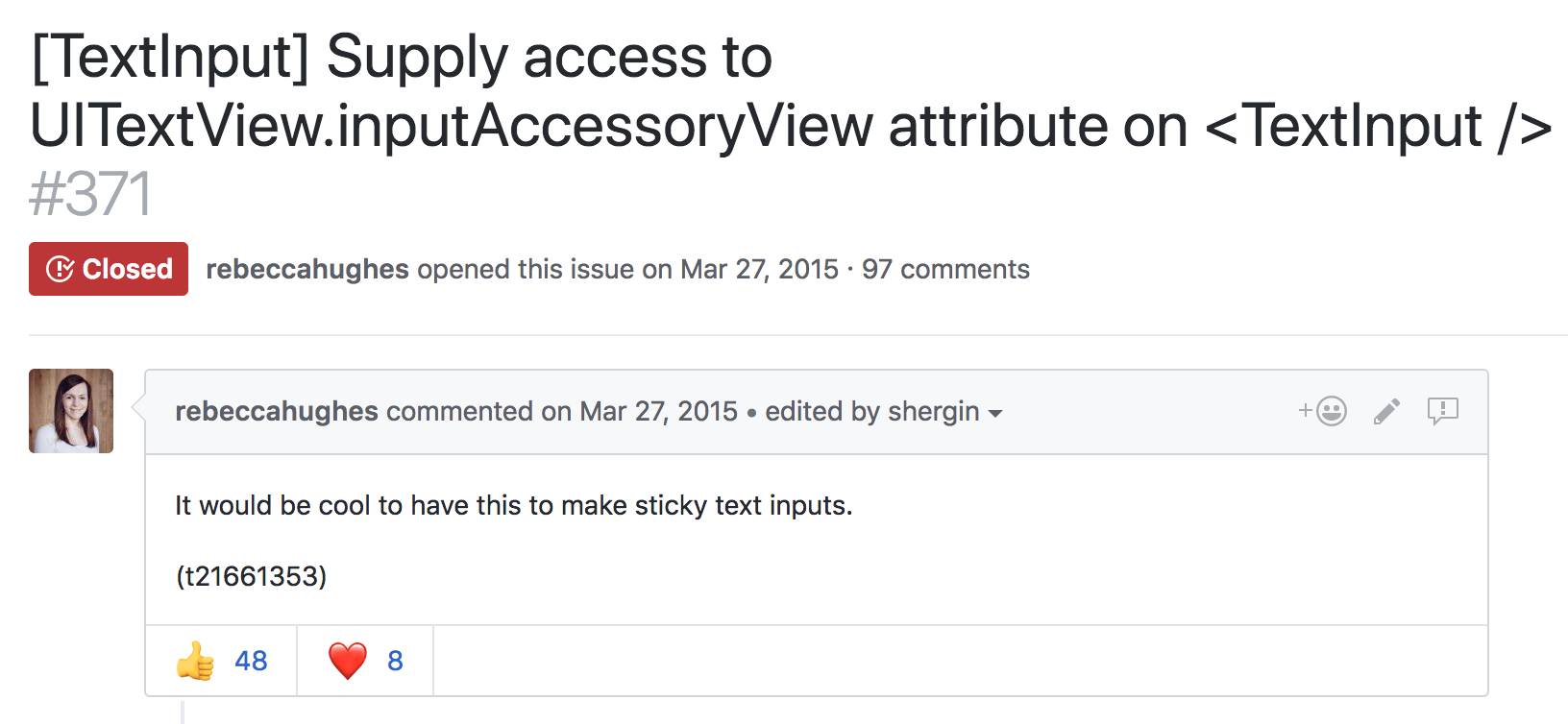

Three years ago, a GitHub issue was opened to support input accessory view from React Native.

In the ensuing years, there have been countless '+1s', various workarounds, and zero concrete changes to RN on this issue - until today. Starting with iOS, we're exposing an API for accessing the native input accessory view and we are excited to share how we built it.

What exactly is an input accessory view? Reading Apple's developer documentation, we learn that it's a custom view which can be anchored to the top of the system keyboard whenever a receiver becomes the first responder. Anything that inherits from UIResponder can redeclare the .inputAccessoryView property as read-write, and manage a custom view here. The responder infrastructure mounts the view, and keeps it in sync with the system keyboard. Gestures which dismiss the keyboard, like a drag or tap, are applied to the input accessory view at the framework level. This allows us to build content with interactive keyboard dismissal, an integral feature in top-tier messaging apps like iMessage and WhatsApp.

There are two common use cases for anchoring a view to the top of the keyboard. The first is creating a keyboard toolbar, like the Facebook composer background picker.

In this scenario, the keyboard is focused on a text input field, and the input accessory view is used to provide additional keyboard functionality. This functionality is contextual to the type of input field. In a mapping application it could be address suggestions, or in a text editor, it could be rich text formatting tools.

The Objective-C UIResponder who owns the <InputAccessoryView> in this scenario should be clear. The <TextInput> has become first responder, and under the hood this becomes an instance of UITextView or UITextField.

The second common scenario is sticky text inputs:

Here, the text input is actually part of the input accessory view itself. This is commonly used in messaging applications, where a message can be composed while scrolling through a thread of previous messages.

Who owns the <InputAccessoryView> in this example? Can it be the UITextView or UITextField again? The text input is inside the input accessory view, so this sounds like a circular dependency. Solving this issue alone is another blog post in itself. Spoilers: the owner is a generic UIView subclass that we manually tell to becomeFirstResponder.

We now know what an <InputAccessoryView> is, and how we want to use it. The next step is designing an API that makes sense for both use cases, and works well with existing React Native components like <TextInput>.

For keyboard toolbars, there are a few things we want to consider:

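1. We want to be able to hoist any React Native view hierarchy into the <InputAccessoryView>.

2. We want this detached view hierarchy to accept touches and be able to update application state.

3. We want to link an <InputAccessoryView> to a particular <TextInput>.

4. We want to be able to share an <InputAccessoryView> across multiple text inputs, without duplicating any code.

We can achieve #1 using a concept similar to React portals. In this design, we portal React Native views to a UIView hierarchy managed by the responder infrastructure. Since React Native views render as UIViews, this is actually quite straightforward - we can just override: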

- (void)insertReactSubview:(UIView *)subview atIndex:(NSInteger)atIndex

and pipe all the subviews to a new UIView hierarchy. For #2, we set up a new RCTTouchHandler for the <InputAccessoryView>. State updates are achieved by using regular event callbacks. For #3 and #4, we use the nativeID field to locate the accessory view UIView hierarchy in native code during the creation of a <TextInput> component. This function uses the .inputAccessoryView property of the underlying native text input. Doing this effectively links <InputAccessoryView> to <TextInput> in their ObjC implementations.
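The nativeID lookup used for #3 and #4 can be pictured as a small registry. The sketch below is a conceptual model in TypeScript, not the Objective-C implementation: plain objects stand in for native views, and every type, class and method name is hypothetical.

```typescript
// Conceptual model only: plain objects stand in for the native views.
interface AccessoryView {
  nativeID: string;
}

interface TextInputModel {
  inputAccessoryViewID?: string;
  inputAccessoryView?: AccessoryView; // stands in for the native .inputAccessoryView property
}

class AccessoryViewRegistry {
  private viewsByID: {[nativeID: string]: AccessoryView} = {};

  register(view: AccessoryView): void {
    this.viewsByID[view.nativeID] = view;
  }

  // Runs when a text input is created: find the accessory view by ID and attach it.
  link(input: TextInputModel): void {
    if (input.inputAccessoryViewID === undefined) {
      return;
    }
    const view = this.viewsByID[input.inputAccessoryViewID];
    if (view !== undefined) {
      input.inputAccessoryView = view;
    }
  }
}

const registry = new AccessoryViewRegistry();
registry.register({nativeID: 'inputAccessoryView1'});

const firstInput: TextInputModel = {inputAccessoryViewID: 'inputAccessoryView1'};
const secondInput: TextInputModel = {inputAccessoryViewID: 'inputAccessoryView1'};
registry.link(firstInput);
registry.link(secondInput);

// Both inputs now point at the very same accessory view instance.
console.log(firstInput.inputAccessoryView === secondInput.inputAccessoryView);
```

Because inputs refer to the accessory view by ID rather than containing it, several text inputs can share one view without duplicating its contents.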

Supporting sticky text inputs (scenario 2) adds a few more constraints. For this design, the input accessory view has a text input as a child, so linking via nativeID is not an option. Instead, we set the .inputAccessoryView of a generic off-screen UIView to our native <InputAccessoryView> hierarchy. By manually telling this generic UIView to become first responder, the hierarchy is mounted by responder infrastructure. This concept is explained thoroughly in the aforementioned blog post.

Of course not everything was smooth sailing while building this API. Here are a few pitfalls we encountered, along with how we fixed them.

An initial idea for building this API involved listening to NSNotificationCenter for UIKeyboardWill(Show/Hide/ChangeFrame) events. This pattern is used in some open-sourced libraries, and internally in some parts of the Facebook app. Unfortunately, UIKeyboardDidChangeFrame events were not being called in time to update the <InputAccessoryView> frame on swipes. Also, changes in keyboard height are not captured by these events. This creates a class of bugs that manifest like this:

On iPhone X, text and emoji keyboard are different heights. Most applications using keyboard events to manipulate text input frames had to fix the above bug. Our solution was to commit to using the .inputAccessoryView property, which meant that the responder infrastructure handles frame updates like this.

Another tricky bug we encountered was avoiding the home pill on iPhone X. You may be thinking, “Apple developed safeAreaLayoutGuide for this very reason, this is trivial!”. We were just as naive. The first issue is that the native <InputAccessoryView> implementation has no window to anchor to until the moment it is about to appear. That's alright, we can override -(BOOL)becomeFirstResponder and enforce layout constraints there. Adhering to these constraints bumps the accessory view up, but another bug arises:

The input accessory view successfully avoids the home pill, but now content behind the unsafe area is visible. The solution lies in this radar. I wrapped the native <InputAccessoryView> hierarchy in a container which doesn't conform to the safeAreaLayoutGuide constraints. The native container covers the content in the unsafe area, while the <InputAccessoryView> stays within the safe area boundaries.

Here's an example which builds a keyboard toolbar button to reset <TextInput> state.

class TextInputAccessoryViewExample extends React.Component<
  {},
  *,
> {
  constructor(props) {
    super(props);
    this.state = {text: 'Placeholder Text'};
  }

  render() {
    const inputAccessoryViewID = 'inputAccessoryView1';
    return (
      <View>
        <TextInput
          style={styles.default}
          inputAccessoryViewID={inputAccessoryViewID}
          onChangeText={text => this.setState({text})}
          value={this.state.text}
        />
        <InputAccessoryView nativeID={inputAccessoryViewID}>
          <View style={{backgroundColor: 'white'}}>
            <Button
              onPress={() =>
                this.setState({text: 'Placeholder Text'})
              }
              title="Reset Text"
            />
          </View>
        </InputAccessoryView>
      </View>
    );
  }
}

Another example for Sticky Text Inputs can be found in the repository.

The full commit for this feature implementation is here. <InputAccessoryView> will be available in the upcoming v0.55.0 release.

Happy keyboarding :)

AWS is well known in the technology industry as a provider of cloud services. These include compute, storage, and database technologies, as well as fully managed serverless offerings. The AWS Mobile team has been working closely with customers and members of the JavaScript ecosystem to make cloud-connected mobile and web applications more secure, scalable, and easier to develop and deploy. We began with a complete starter kit, but have a few more recent developments.

This blog post talks about some interesting things for React and React Native developers:

React Native applications are very easy to bootstrap using tools like Create React Native App and Expo. However, connecting them to the cloud can be challenging to navigate when you try to match a use case to infrastructure services. For example, your React Native app might need to upload photos. Should these be protected per user? That probably means you need some sort of registration or sign-in process. Do you want your own user directory or are you using a social media provider? Maybe your app also needs to call an API with custom business logic after users log in.

To help JavaScript developers with these problems, we released a library named AWS Amplify. The design is broken into "categories" of tasks, instead of AWS-specific implementations. For example, if you wanted users to register, log in, and then upload private photos, you would simply pull in Auth and Storage categories to your application:

import { Auth } from 'aws-amplify';

Auth.signIn(username, password)

.then(user => console.log(user))

.catch(err => console.log(err));

Auth.confirmSignIn(user, code)

.then(data => console.log(data))

.catch(err => console.log(err));

In the code above, you can see an example of some of the common tasks that Amplify helps you with, such as using multi-factor authentication (MFA) codes with either email or SMS. The supported categories today are:

One of the nice things about Amplify is that it encodes "best practices" in the design for your specific programming environment. For example, one thing we found working with customers and React Native developers is that shortcuts taken during development to get things working quickly would make it through to production stacks. These can compromise either scalability or security, and force infrastructure rearchitecture and code refactoring.

One example of how we help developers avoid this is the Serverless Reference Architectures with AWS Lambda. These show you best practices around using Amazon API Gateway and AWS Lambda together when building your backend. This pattern is encoded into the API category of Amplify. You can use this pattern to interact with several different REST endpoints, and pass headers all the way through to your Lambda function for custom business logic. We’ve also released an AWS Mobile CLI for bootstrapping new or existing React Native projects with these features. To get started, just install via npm, and follow the configuration prompts:

npm install --global awsmobile-cli

awsmobile configure

Another example of encoded best practices that is specific to the mobile ecosystem is password security. The default Auth category implementation leverages Amazon Cognito user pools for user registration and sign-in. This service implements Secure Remote Password protocol as a way of protecting users during authentication attempts. If you're inclined to read through the mathematics of the protocol, you'll notice that you must use a large prime number when calculating the password verifier over a primitive root to generate a Group. In React Native environments, JIT is disabled. This makes BigInteger calculations for security operations such as this less performant. To account for this, we've released native bridges in Android and iOS that you can link inside your project:

npm install --save aws-amplify-react-native

react-native link amazon-cognito-identity-js

We're also excited to see that the Expo team has included this in their latest SDK so that you can use Amplify without ejecting.
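To give a feel for why these calculations are costly, here is a minimal sketch of modular exponentiation, the kind of big-integer operation that protocols like Secure Remote Password rely on. It is not the code used by Amazon Cognito or the native bridges. The `modPow` name is hypothetical, the numbers are toy values, and the sketch assumes a JavaScript runtime with built-in `BigInt` support.

```javascript
// Square-and-multiply modular exponentiation with BigInt.
// Toy numbers only: real SRP groups use primes that are thousands of bits long.
function modPow(base, exponent, modulus) {
  if (modulus === 1n) return 0n;
  let result = 1n;
  base %= modulus;
  while (exponent > 0n) {
    // Multiply the result in whenever the current low bit of the exponent is set.
    if (exponent & 1n) result = (result * base) % modulus;
    base = (base * base) % modulus;
    exponent >>= 1n;
  }
  return result;
}

modPow(4n, 13n, 497n); // 445n
```

Each loop iteration performs one or two big-integer multiplications and reductions, and with realistic parameter sizes there are thousands of iterations, which is the work that benefits from running natively.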

Finally, specific to React Native (and React) development, Amplify contains higher order components (HOCs) for easily wrapping functionality, such as for sign-up and sign-in to your app:

import Amplify, { withAuthenticator } from 'aws-amplify-react-native';

import aws_exports from './aws-exports';

Amplify.configure(aws_exports);

class App extends React.Component {

...

}

export default withAuthenticator(App);

The underlying component is also provided as <Authenticator />, which gives you full control to customize the UI. It also gives you some properties around managing the state of the user, such as if they've signed in or are waiting for MFA confirmation, and callbacks that you can fire when state changes.

Similarly, you'll find general React components that you can use for different use cases. You can customize these to your needs, for example, to show all private images from Amazon S3 in the Storage module:

<S3Album

level="private"

path={path}

filter={(item) => /jpg/i.test(item.path)}/>
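The filter in the snippet above matches "jpg" anywhere in the path, so a file such as `jpg-notes.txt` would also pass. As a small sketch of a stricter predicate, the following assumes only that each item has a `path` string, as in the snippet; the `isJpeg` name and the sample items are hypothetical.

```javascript
// Match only paths that end in .jpg or .jpeg, ignoring case.
const isJpeg = item => /\.jpe?g$/i.test(item.path);

// Hypothetical items: only a `path` string is assumed.
const items = [
  {path: 'photos/cat.JPG'},
  {path: 'photos/jpg-notes.txt'},
  {path: 'photos/dog.jpeg'},
];

const jpegs = items.filter(isJpeg).map(item => item.path);
// jpegs: ['photos/cat.JPG', 'photos/dog.jpeg']
```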

You can control many of the component features via props, as shown earlier, with public or private storage options. There are even capabilities to automatically gather analytics when users interact with certain UI components:

return <S3Album track/>

AWS Amplify favors a convention over configuration style of development, with a global initialization routine or initialization at the category level. The quickest way to get started is with an aws-exports file. However, developers can also use the library independently with existing resources.

For a deep dive into the philosophy and to see a full demo, check out the video from AWS re:Invent.

Shortly after the launch of AWS Amplify, we also released AWS AppSync. This is a fully managed GraphQL service that has both offline and real-time capabilities. Although you can use GraphQL in different client programming languages (including native Android and iOS), it's quite popular among React Native developers. This is because the data model fits nicely into a unidirectional data flow and component hierarchy.

AWS AppSync enables you to connect to resources in your own AWS account, meaning you own and control your data. This is done by using data sources, and the service supports Amazon DynamoDB, Amazon Elasticsearch Service, and AWS Lambda. Because you can mix and match data sources, you can combine functionality (such as NoSQL and full-text search) in a single GraphQL API as a schema. The AppSync service can also provision from a schema, so if you aren't familiar with AWS services, you can write GraphQL SDL, click a button, and you're automatically up and running.

The real-time functionality in AWS AppSync is controlled via GraphQL subscriptions with a well-known, event-based pattern. Because subscriptions in AWS AppSync are controlled on the schema with a GraphQL directive, and a schema can use any data source, this means you can trigger notifications from database operations with Amazon DynamoDB and Amazon Elasticsearch Service, or from other parts of your infrastructure with AWS Lambda.

In a way similar to AWS Amplify, you can use enterprise security features on your GraphQL API with AWS AppSync. The service lets you get started quickly with API keys. However, as you roll to production it can transition to using AWS Identity and Access Management (IAM) or OIDC tokens from Amazon Cognito user pools. You can control access at the resolver level with policies on types. You can even use logical checks for fine-grained access control checks at run time, such as detecting if a user is the owner of a specific database resource. There are also capabilities around checking group membership for executing resolvers or individual database record access.

To help React Native developers learn more about these technologies, there is a built-in GraphQL sample schema that you can launch on the AWS AppSync console homepage. This sample deploys a GraphQL schema, provisions database tables, and connects queries, mutations, and subscriptions automatically for you. There is also a functioning React Native example for AWS AppSync that leverages this built-in schema (as well as a React example), which enables you to get both your client and cloud components running in minutes.

Getting started is simple when you use the AWSAppSyncClient, which plugs in to the Apollo Client. The AWSAppSyncClient handles security and signing for your GraphQL API, offline functionality, and the subscription handshake and negotiation process:

import AWSAppSyncClient from "aws-appsync";

import { Rehydrated } from 'aws-appsync-react';

import { AUTH_TYPE } from "aws-appsync/lib/link/auth-link";

const client = new AWSAppSyncClient({

url: awsconfig.graphqlEndpoint,

region: awsconfig.region,

auth: {type: AUTH_TYPE.API_KEY, apiKey: awsconfig.apiKey}

});

The AppSync console provides a configuration file for download, which contains your GraphQL endpoint, AWS Region, and API key. You can then use the client with React Apollo:

const WithProvider = () => (

<ApolloProvider client={client}>

<Rehydrated>

<App />

</Rehydrated>

</ApolloProvider>

);

At this point, you can use standard GraphQL queries:

query ListEvents {

listEvents{

items{

__typename

id

name

where

when

description

comments{

__typename

items{

__typename

eventId

commentId

content

createdAt

}

nextToken

}

}

}

}

The example above shows a query with the sample app schema provisioned by AppSync. It not only showcases interaction with DynamoDB, but also includes pagination of data (including encrypted tokens) and type relations between Events and Comments. Because the app is configured with the AWSAppSyncClient, data is automatically persisted offline and will synchronize when devices reconnect.

You can see a deep dive of the client technology behind this and a React Native demo in this video.

The team behind the libraries is eager to hear how these libraries and services work for you. They also want to hear what else we can do to make React and React Native development with cloud services easier for you. Reach out to the AWS Mobile team on GitHub for AWS Amplify or AWS AppSync.

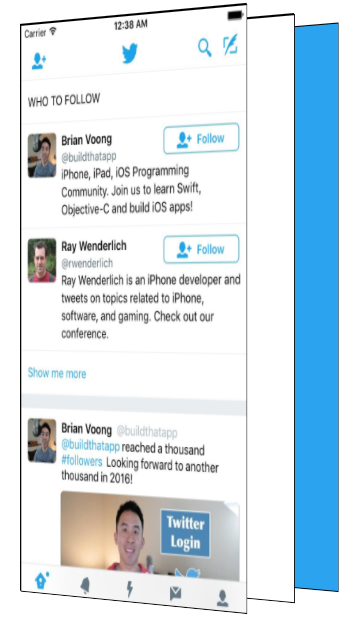

Twitter’s iOS app has a loading animation I quite enjoy.

Once the app is ready, the Twitter logo delightfully expands, revealing the app.

I wanted to figure out how to recreate this loading animation with React Native.

To understand how to build it, I first had to understand the different pieces of the loading animation. The easiest way to see the subtlety is to slow it down.

There are a few major pieces in this that we will need to figure out how to build.

It took me quite a while to figure out how to make this animation.

I started with an incorrect assumption that the blue background and Twitter bird were a layer on top of the app and that as the bird grew, it became transparent which revealed the app underneath. This approach doesn’t work because the Twitter bird becoming transparent would show the blue layer, not the app underneath!

Luckily for you, dear reader, you don’t have to go through the same frustration I did. You get this nice tutorial skipping to the good stuff!

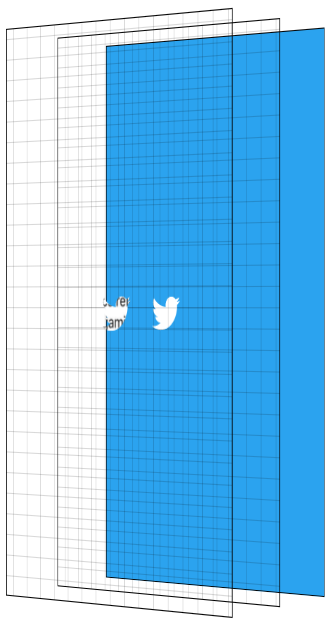

Before we get to code, it is important to understand how to break this down. To help visualize this effect, I recreated it in CodePen (embedded in a few paragraphs) so you can interactively see the different layers.

There are three main layers to this effect. The first is the blue background layer. Even though this seems to appear on top of the app, it is actually in the back.

We then have a plain white layer. And then lastly, in the very front, is our app.

The main trick to this animation is using the Twitter logo as a mask and masking both the app and the white layer. I won’t go too deep on the details of masking; there are plenty of resources online for that.

The basics of masking in this context are simple: opaque pixels of the mask show the content they are masking, whereas transparent pixels of the mask hide it.
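As a conceptual sketch of that rule (not how MaskedViewIOS is implemented), each pixel can be modeled by its alpha alone, with the visible result being the content alpha scaled by the mask alpha. The `applyMask` name and the one-dimensional row of pixels are assumptions made for illustration.

```javascript
// Conceptual model of alpha masking.
// Each pixel is represented only by its alpha (0 = transparent, 1 = opaque).
function applyMask(contentAlphas, maskAlphas) {
  if (contentAlphas.length !== maskAlphas.length) {
    throw new Error('content and mask must cover the same pixels');
  }
  // The visible alpha is the content alpha scaled by the mask alpha.
  return contentAlphas.map((alpha, i) => alpha * maskAlphas[i]);
}

// A fully opaque row of content behind a mask that is opaque only in the middle.
const content = [1, 1, 1, 1, 1];
const mask = [0, 1, 1, 1, 0];
const visible = applyMask(content, mask);
console.log(visible); // [0, 1, 1, 1, 0]
```

Only the pixels under the opaque part of the mask remain visible, which is why scaling the mask up reveals more of the content behind it.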

We use the Twitter logo as a mask and have it mask two layers: the solid white layer and the app layer.

To reveal the app, we scale the mask up until it is larger than the entire screen.

While the mask is scaling up, we fade in the opacity of the app layer, showing the app and hiding the solid white layer behind it. To finish the effect, we start the app layer at a scale > 1, and scale it down to 1 as the animation is ending. We then hide the non-app layers as they will never be seen again.

They say a picture is worth 1,000 words. How many words is an interactive visualization worth? Click through the animation with the “Next Step” button. Showing the layers gives you a side view perspective. The grid is there to help visualize the transparent layers.

Alrighty. Now that we know what we are building and how the animation works, we can get down to the code — the reason you are really here.

The main piece of this puzzle is MaskedViewIOS, a core React Native component.

import {MaskedViewIOS} from 'react-native';

<MaskedViewIOS maskElement={<Text>Basic Mask</Text>}>

<View style={{backgroundColor: 'blue'}} />

</MaskedViewIOS>;

MaskedViewIOS takes props maskElement and children. The children are masked by the maskElement. Note that the mask doesn’t need to be an image, it can be any arbitrary view. The behavior of the above example would be to render the blue view, but for it to be visible only where the words “Basic Mask” are from the maskElement. We just made complicated blue text.

What we want to do is render our blue layer, and then on top render our masked app and white layers with the Twitter logo.

{fullScreenBlueLayer}

<MaskedViewIOS

style={{flex: 1}}

maskElement={

<View style={styles.centeredFullScreen}>

<Image source={twitterLogo} />

</View>

}>

{fullScreenWhiteLayer}

<View style={{flex: 1}}>

<MyApp />

</View>

</MaskedViewIOS>;

This will give us the layers we see below.

We have all the pieces we need to make this work. The next step is animating them. To make this animation feel good, we will be utilizing React Native’s Animated API.

Animated lets us define our animations declaratively in JavaScript. By default, these animations run in JavaScript and tell the native layer what changes to make on every frame. Even though JavaScript will try to update the animation every frame, it will likely not be able to do that fast enough and will cause dropped frames (jank) to occur. Not what we want!

Animated has special behavior to allow you to get animations without this jank. Animated has a flag called useNativeDriver which sends your animation definition from JavaScript to native at the beginning of your animation, allowing the native side to process the updates to your animation without having to go back and forth to JavaScript every frame. The downside of useNativeDriver is you can only update a specific set of properties, mostly transform and opacity. You can’t animate things like background color with useNativeDriver, at least not yet — we will add more over time, and of course you can always submit a PR for properties you need for your project, benefitting the whole community 😀.

Since we want this animation to be smooth, we will work within these constraints. For a more in-depth look at how useNativeDriver works under the hood, check out our blog post announcing it.

There are 4 components to our animation:

With Animated, there are two main ways to define your animation. The first is by using Animated.timing, which lets you say exactly how long your animation will run, along with an easing curve to smooth out the motion. The other approach is by using the physics-based APIs such as Animated.spring. With Animated.spring, you specify parameters like the amount of friction and tension in the spring, and let physics run your animation.
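To make the timing approach concrete, here is a simplified model of how a duration and an easing curve turn elapsed time into a value. This is a sketch for intuition, not the Animated implementation; `timingValue`, `linear`, and `easeInOutQuad` are hypothetical names chosen for this example.

```javascript
// Simplified model of a timing animation (not the Animated implementation).
const linear = t => t;

// A common ease-in-out curve, picked arbitrarily for this sketch.
const easeInOutQuad = t =>
  t < 0.5 ? 2 * t * t : 1 - Math.pow(-2 * t + 2, 2) / 2;

function timingValue(elapsedMs, durationMs, from, to, easing = linear) {
  // Clamp normalized time to [0, 1] so the value never overshoots the target.
  const t = Math.min(Math.max(elapsedMs / durationMs, 0), 1);
  return from + (to - from) * easing(t);
}

timingValue(500, 1000, 0, 100); // 50 with linear easing
timingValue(250, 1000, 0, 100, easeInOutQuad); // 12.5, a slower start
```

The duration fixes when the animation ends, while the easing curve only changes how the value is distributed over that time.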

We have multiple animations we want to be running at the same time which are all closely related to each other. For example, we want the app to start fading in while the mask is mid-reveal. Because these animations are closely related, we will use Animated.timing with a single Animated.Value.

Animated.Value is a wrapper around a native value that Animated uses to know the state of an animation. You typically want to only have one of these for a complete animation. Most components that use Animated will store the value in state.

Since I’m thinking about this animation as steps occurring at different points in time along the complete animation, we will start our Animated.Value at 0, representing 0% complete, and end our value at 100, representing 100% complete.

Our initial component state will be the following.

state = {

loadingProgress: new Animated.Value(0),

};

When we are ready to begin the animation, we tell Animated to animate this value to 100.

Animated.timing(this.state.loadingProgress, {

toValue: 100,

duration: 1000,

useNativeDriver: true, // This is important!

}).start();

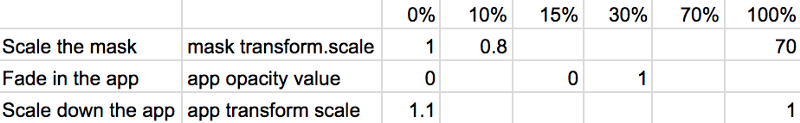

I then try to figure out a rough estimate of the different pieces of the animations and the values I want them to have at different stages of the overall animation. Below is a table of the different pieces of the animation, and what I think their values should be at different points as we progress through time.

The Twitter bird mask should start at scale 1, and it gets smaller before it shoots up in size. So at 10% through the animation, it should have a scale value of .8 before shooting up to scale 70 at the end. Picking 70 was pretty arbitrary to be honest, it needed to be large enough that the bird fully revealed the screen and 60 wasn’t big enough 😀. Something interesting about this part though is that the higher the number, the faster it will look like it is growing because it has to get there in the same amount of time. This number took some trial and error to make look good with this logo. Logos / devices of different sizes will require this end-scale to be different to ensure the entire screen is revealed.

The app should stay transparent for a while, at least while the Twitter logo is getting smaller. Based on the official animation, I want to start showing it when the bird is midway through scaling up and to fully reveal it pretty quickly. So at 15% we start showing it, and at 30% through the overall animation it is fully visible.

The app scale starts at 1.1 and scales down to its regular scale by the end of the animation.

What we essentially did above is map the values from the animation progress percentage to the values for the individual pieces. We do that with Animated using .interpolate. We create 3 different style objects, one for each piece of the animation, using interpolated values based off of this.state.loadingProgress.

const loadingProgress = this.state.loadingProgress;

const opacityClearToVisible = {

opacity: loadingProgress.interpolate({

inputRange: [0, 15, 30],

outputRange: [0, 0, 1],

extrapolate: 'clamp',

// clamp means when the input is 30-100, output should stay at 1

}),

};

const imageScale = {

transform: [

{

scale: loadingProgress.interpolate({

inputRange: [0, 10, 100],

outputRange: [1, 0.8, 70],

}),

},

],

};

const appScale = {

transform: [

{

scale: loadingProgress.interpolate({

inputRange: [0, 100],

outputRange: [1.1, 1],

}),

},

],

};
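To sanity-check the ranges above without running the app, the mapping can be approximated in plain JavaScript. The following is a piecewise-linear sketch in the spirit of .interpolate, not the library's code; the `interpolate` and `stylesAt` helpers are hypothetical and only cover numeric ranges with optional clamping.

```javascript
// Piecewise-linear mapping from an input range to an output range.
// A sketch for reasoning about values, not Animated's implementation.
function interpolate(input, inputRange, outputRange, clamp = false) {
  const last = inputRange.length - 1;
  if (clamp) {
    input = Math.min(Math.max(input, inputRange[0]), inputRange[last]);
  }
  // Find the segment containing the input (the last segment is used beyond the end).
  let i = 0;
  while (i < last - 1 && input > inputRange[i + 1]) {
    i++;
  }
  const t = (input - inputRange[i]) / (inputRange[i + 1] - inputRange[i]);
  return outputRange[i] + t * (outputRange[i + 1] - outputRange[i]);
}

// The three animated values at a given loading progress (0 to 100).
function stylesAt(progress) {
  return {
    appOpacity: interpolate(progress, [0, 15, 30], [0, 0, 1], true),
    maskScale: interpolate(progress, [0, 10, 100], [1, 0.8, 70]),
    appScale: interpolate(progress, [0, 100], [1.1, 1]),
  };
}

stylesAt(22.5).appOpacity; // 0.5, halfway through the fade-in
```

Calling `stylesAt` at a few progress values is a quick way to check that the app is still hidden while the logo shrinks and fully visible well before the mask finishes growing.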

Now that we have these style objects, we can use them when rendering the snippet of the view from earlier in the post. Note that only Animated.View, Animated.Text, and Animated.Image are able to use style objects that use Animated.Value.

const fullScreenBlueLayer = (

<View style={styles.fullScreenBlueLayer} />

);

const fullScreenWhiteLayer = (

<View style={styles.fullScreenWhiteLayer} />

);

return (

<View style={styles.fullScreen}>

{fullScreenBlueLayer}

<MaskedViewIOS

style={{flex: 1}}

maskElement={

<View style={styles.centeredFullScreen}>

<Animated.Image

style={[styles.maskImageStyle, imageScale]}

source={twitterLogo}

/>

</View>

}>

{fullScreenWhiteLayer}

<Animated.View

style={[opacityClearToVisible, appScale, {flex: 1}]}>

{this.props.children}

</Animated.View>

</MaskedViewIOS>

</View>

);

Yay! We now have the animation pieces looking like we want. Now we just have to clean up our blue and white layers which will never be seen again.

To know when we can clean them up, we need to know when the animation is complete. Luckily, where we call Animated.timing, .start takes an optional callback that runs when the animation is complete.

Animated.timing(this.state.loadingProgress, {

toValue: 100,

duration: 1000,

useNativeDriver: true,

}).start(() => {

this.setState({

animationDone: true,

});

});

Now that we have a value in state to know whether we are done with the animation, we can modify our blue and white layers to use that.

const fullScreenBlueLayer = this.state.animationDone ? null : (

<View style={[styles.fullScreenBlueLayer]} />

);

const fullScreenWhiteLayer = this.state.animationDone ? null : (

<View style={[styles.fullScreenWhiteLayer]} />

);

Voila! Our animation now works and we clean up our unused layers once the animation is done. We have built the Twitter app loading animation!

Don’t fret, dear reader. I too hate when guides only give you chunks of the code and don’t give you the completed source.

This component has been published to npm and is on GitHub as react-native-mask-loader. To try this out on your phone, it is available on Expo here:

The React Native monthly meeting is still going strong! Make sure to check a note on the bottom of this post for the next sessions.

react-native-gesture-handler and we’re really excited about some of the improvements we have planned. If you're looking to contribute to the community, check out react-native-maps and react-native-svg, which could both use some help!

There's been some discussion on re-purposing this meeting to discuss a single and specific topic (e.g. navigation, moving React Native modules into separate repos, documentation, ...). That way we feel we can contribute the best to the React Native community. It might take place in the next meeting session. Feel free to tweet what you'd like to see covered as a topic.

The React Native monthly meeting continues! Let's see what our teams are up to.

I18nManager.swapLeftAndRightInRTL(false) in your React Native components to opt into them.

The next session is scheduled for Wednesday, December 6, 2017. Feel free to ping me on Twitter if you have any suggestions on how we should improve the output of the meeting.